What is the difference between hierarchical regression and multiple regression?

Since a conventional multiple linear regression analysis assumes that all cases are independent of each other, a different kind of analysis (hierarchical linear modeling, also called multilevel modeling) is required when dealing with nested data. … Hierarchical regression, on the other hand, deals with how predictor (independent) variables are selected and entered into the model.

What is the difference between regression and multiple regression?

The major difference between them is that simple regression establishes the relationship between one dependent variable and one independent variable, whereas multiple regression establishes the relationship between one dependent variable and two or more independent variables.

What are hierarchical regressions?

Hierarchical regression is a way to show whether the variables of interest explain a statistically significant amount of variance in your dependent variable (DV) after accounting for the variables entered in earlier steps. It is a framework for model comparison rather than a statistical method in its own right.
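
To make the model comparison concrete, here is a minimal Python sketch of the usual test for the change in R² between two steps. It assumes you already have R² for the earlier-step (reduced) model and the later-step (full) model. The function name `r2_change_f_test` and the numbers in the example are hypothetical. The p-value comes from an F distribution with (df1, df2) degrees of freedom. Computing it would need a statistics library such as SciPy, so it is not computed here.

```python
def r2_change_f_test(r2_reduced, r2_full, n, k_reduced, k_full):
    """F statistic for the increase in R^2 when predictors are added in a later step.

    r2_reduced, r2_full: R^2 of the earlier-step and later-step models
    n: sample size
    k_reduced, k_full: number of predictors (excluding the intercept) in each model
    """
    delta_r2 = r2_full - r2_reduced
    df1 = k_full - k_reduced   # number of predictors added in this step
    df2 = n - k_full - 1       # residual degrees of freedom of the full model
    f_stat = (delta_r2 / df1) / ((1 - r2_full) / df2)
    return delta_r2, f_stat, df1, df2


# Hypothetical example: step 1 enters 3 control variables, step 2 adds 2 variables of interest.
delta, f_stat, df1, df2 = r2_change_f_test(r2_reduced=0.20, r2_full=0.30, n=103, k_reduced=3, k_full=5)
print(f"Delta R^2 = {delta:.2f}, F({df1}, {df2}) = {f_stat:.2f}")
```

A large F relative to the critical value for (df1, df2) indicates that the variables added in step 2 explain variance beyond the step 1 variables.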

What is hierarchical multiple regression used for?

For example, one study used hierarchical multiple regression to examine the relationship between eight independent variables and one dependent variable, in order to isolate predictors with a significant influence on behavior and sexual practices.

What is the major difference between simple regression and multiple regression?

Simple regression uses one independent variable to predict one dependent variable, whereas multiple regression uses two or more independent variables to predict one dependent variable.

What is the difference between hierarchical regression and stepwise regression?

In hierarchical regression you decide which terms to enter at what stage, basing your decision on substantive knowledge and statistical expertise. In stepwise regression, you let the computer decide which terms to enter at what stage, telling it to base its decision on some criterion such as the increase in R², AIC, BIC and so on.
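
For contrast, a bare-bones forward stepwise procedure can be sketched in Python with NumPy. The sketch assumes AIC as the criterion, although the text also mentions R² increase and BIC. It uses the Gaussian form n·ln(RSS/n) + 2k, which drops an additive constant. The helper names and the simulated data are hypothetical. At each step the procedure adds the candidate that lowers AIC the most, and it stops when no remaining candidate lowers it further.

```python
import numpy as np


def ols_rss(X, y):
    """Residual sum of squares of an OLS fit of y on the columns of X plus an intercept."""
    A = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return float(resid @ resid)


def aic(rss, n, k):
    # Gaussian-likelihood AIC up to an additive constant; k = number of estimated coefficients
    return n * np.log(rss / n) + 2 * k


def forward_stepwise(X, y, names):
    n = len(y)
    selected, remaining = [], list(range(X.shape[1]))
    best = aic(float(np.sum((y - y.mean()) ** 2)), n, 1)  # intercept-only model
    while remaining:
        # Score every model that adds one more candidate predictor
        scores = [(aic(ols_rss(X[:, selected + [j]], y), n, len(selected) + 2), j) for j in remaining]
        cand, j = min(scores)
        if cand >= best:  # no candidate improves AIC: stop
            break
        best = cand
        selected.append(j)
        remaining.remove(j)
    return [names[j] for j in selected]


# Simulated data: y depends on x1 and x2 only; x3 is pure noise
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = 2.0 * X[:, 0] - 1.5 * X[:, 1] + rng.normal(scale=0.5, size=200)
chosen = forward_stepwise(X, y, ["x1", "x2", "x3"])
print(chosen)
```

In hierarchical regression, the order would instead be fixed in advance by the analyst, and each step would be tested explicitly.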

What is difference between R-Squared and adjusted R squared?

Adjusted R-squared can be calculated mathematically in terms of sums of squares. The only difference between the R-squared and adjusted R-squared equations is the degrees of freedom. … Adjusted R-squared can be calculated from the value of R-squared, the number of independent variables (predictors), and the total sample size.
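
Written out, the formula is adjusted R² = 1 − (1 − R²)(n − 1)/(n − p − 1). Here n is the sample size and p is the number of predictors, excluding the intercept. The short Python sketch below applies it; the function name is hypothetical. The last call shows that adjusted R² can be negative, and even below −1, when a weak model uses many predictors relative to n.

```python
def adjusted_r2(r2, n, p):
    """Adjusted R^2 from R^2, sample size n and number of predictors p (excluding the intercept)."""
    if n - p - 1 <= 0:
        raise ValueError("adjusted R^2 needs n > p + 1")
    # (n - 1) and (n - p - 1) are the total and residual degrees of freedom
    return 1 - (1 - r2) * (n - 1) / (n - p - 1)


print(adjusted_r2(0.50, n=50, p=5))  # slightly below R^2
print(adjusted_r2(0.00, n=5, p=3))   # R^2 = 0 with only 1 residual df: -3.0
```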

Why multiple regression is better than simple regression?

A linear regression model extended to include more than one independent variable is called a multiple regression model. It can be more accurate than simple regression when the added predictors carry information about the dependent variable. … The principal advantage of a multiple regression model is that it uses more of the available information to estimate the dependent variable.

What are the assumptions of hierarchical regression?

Assumptions for hierarchical linear modeling include normality (the residuals should be normally distributed) and homogeneity of variance (the residual variances should be equal).

What is the difference between correlation and regression?

The main difference between correlation and regression lies in what each says about the relationship between two variables, say x and y. Correlation measures the degree (strength) of the linear association between them, whereas regression estimates how one variable changes with the other.

What is the general form of a multiple regression model?

The multiple regression equation takes the following form: $y = b_1x_1 + b_2x_2 + \dots + b_nx_n + c$. … Then, from Analyze, select “Regression,” and from Regression select “Linear.”
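
As a minimal sketch of what the software estimates, the following Python/NumPy code fits `y = b1*x1 + b2*x2 + c` by ordinary least squares. The data are hypothetical. They are generated without noise from known coefficients, so the fit should recover those coefficients up to rounding.

```python
import numpy as np

# Hypothetical data generated from y = 1.5*x1 - 2.0*x2 + 3.0 (no noise), so the fit can be checked
x1 = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
x2 = np.array([0.5, 1.0, 0.0, 2.0, 1.5, 3.0])
y = 1.5 * x1 - 2.0 * x2 + 3.0

# Design matrix: one column per predictor plus a column of ones for the constant c
A = np.column_stack([x1, x2, np.ones_like(x1)])
(b1, b2, c), *_ = np.linalg.lstsq(A, y, rcond=None)
print(f"y = {b1:.2f}*x1 {b2:+.2f}*x2 {c:+.2f}")
```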

Can adjusted R squared be greater than 1?

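Mathematically, it cannot. Adjusted R² is computed as $1 - \frac{SS_{res}/(n-p-1)}{SS_{tot}/(n-1)}$, where n is the sample size and p the number of predictors. The subtracted fraction is never negative, so adjusted R² can never exceed 1. It has no fixed lower bound, however. When the model fits poorly relative to the number of predictors, adjusted R² can be negative and can even fall below −1.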

What are the three types of multiple regression?

There are several types of multiple regression analysis (e.g., standard, hierarchical, setwise and stepwise). Which type of analysis is conducted depends on the question of interest to the researcher.

What are the advantages of multiple regression?

Multiple regression analysis allows researchers to assess the strength of the relationship between an outcome (the dependent variable) and several predictor variables as well as the importance of each of the predictors to the relationship, often with the effect of other predictors statistically eliminated.

What is R Squared in regression?

R-squared (R²) is a statistical measure that represents the proportion of the variance in a dependent variable that is explained by an independent variable or variables in a regression model.
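
For a least-squares fit with an intercept, R² can be computed as 1 − SS_res/SS_tot. This is the share of the total variation around the mean that the model does not leave in the residuals. Below is a minimal Python sketch with made-up observations and fitted values from the least-squares line ŷ = 2.2 + 0.6x for those data.

```python
def r_squared(y, y_hat):
    """R^2 = 1 - SS_res / SS_tot for observed values y and fitted values y_hat."""
    mean_y = sum(y) / len(y)
    ss_res = sum((obs - fit) ** 2 for obs, fit in zip(y, y_hat))  # unexplained variation
    ss_tot = sum((obs - mean_y) ** 2 for obs in y)                # total variation around the mean
    return 1 - ss_res / ss_tot


x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]
y_hat = [2.2 + 0.6 * xi for xi in x]  # least-squares line for these data
print(round(r_squared(y, y_hat), 3))
```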

What are the four assumptions of multiple linear regression?

Four assumptions of multiple regression are not robust to violation, and researchers can deal with them if they are violated: linearity, reliability of measurement, homoscedasticity, and normality.

Does multiple regression assume normality?

Multiple linear regression analysis makes several key assumptions: … Multivariate normality: multiple regression assumes that the residuals are normally distributed. No multicollinearity: multiple regression assumes that the independent variables are not highly correlated with each other.
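
The multicollinearity assumption is often checked with variance inflation factors (VIF). The VIF of predictor j is 1/(1 − R_j²), where R_j² comes from regressing predictor j on the other predictors. The NumPy sketch below is one way to compute it, using hypothetical simulated data. The cut-off of 5 or 10 that is often quoted is a rule of thumb rather than a fixed rule.

```python
import numpy as np


def vif(X):
    """VIF for each column of X: 1 / (1 - R_j^2), regressing column j on the others plus an intercept."""
    n, k = X.shape
    out = []
    for j in range(k):
        target = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ coef
        r2_j = 1 - (resid @ resid) / np.sum((target - target.mean()) ** 2)
        out.append(1 / (1 - r2_j))
    return out


# Simulated predictors: b is nearly a copy of a, c is unrelated to both
rng = np.random.default_rng(1)
a = rng.normal(size=100)
b = a + rng.normal(scale=0.1, size=100)
c = rng.normal(size=100)
v = vif(np.column_stack([a, b, c]))
print([round(val, 1) for val in v])
```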

What should be in a hierarchical regression table?

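A hierarchical regression table reports the results of each stage of the analysis. For example, a hierarchical regression might examine the relationships between depression (as measured by some numeric scale) and variables including demographics (such as age, sex and ethnic group) in the first stage, and other variables (such as scores on other tests) in a second stage. For each stage, the table typically lists the model R², the change in R² and its F test, and the coefficients of the predictors.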

What is moderated hierarchical regression analysis?

Hierarchical multiple regression can be used to assess the effects of a moderating variable. To test moderation, we look in particular at the interaction effect between X and M and whether or not it is significant in predicting Y.
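
Below is a minimal sketch of this two-step moderation test in Python with NumPy. Step 1 enters X and M, and step 2 adds their product X·M. The interaction is then judged by its coefficient and by the increase in R². The simulated data and the helper name `fit_r2` are hypothetical. Mean-centring X and M before forming the product is a common convention that eases interpretation of the lower-order terms, but it is not a requirement. Significance would still need an F test for the R² change or a t test for the interaction coefficient.

```python
import numpy as np


def fit_r2(columns, y):
    """OLS coefficients (intercept first) and R^2 for y regressed on the given columns."""
    A = np.column_stack([np.ones(len(y))] + columns)
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return coef, 1 - (resid @ resid) / np.sum((y - y.mean()) ** 2)


# Hypothetical data with a true X*M interaction of 0.4
rng = np.random.default_rng(42)
n = 300
x = rng.normal(size=n)
m = rng.normal(size=n)
y = 0.5 * x + 0.3 * m + 0.4 * x * m + rng.normal(size=n)

xc, mc = x - x.mean(), m - m.mean()              # mean-centre before forming the product term
_, r2_step1 = fit_r2([xc, mc], y)                # step 1: main effects only
coef2, r2_step2 = fit_r2([xc, mc, xc * mc], y)   # step 2: add the interaction
print(f"R^2 step 1 = {r2_step1:.3f}, step 2 = {r2_step2:.3f}, interaction b = {coef2[3]:.2f}")
```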

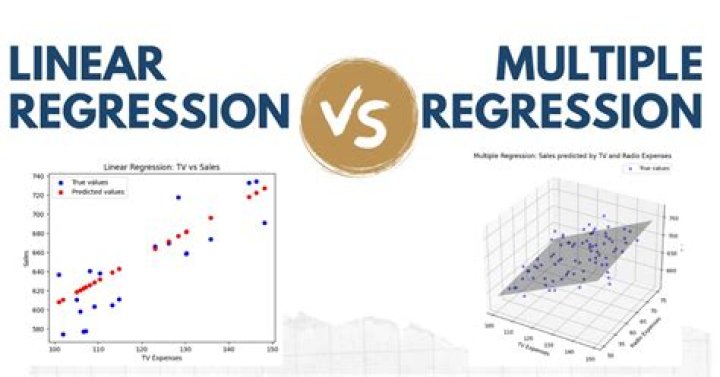

What is the difference between linear and multiple regression analysis?

Linear regression attempts to draw a line that comes closest to the data by finding the slope and intercept that define the line and minimize the sum of squared errors. If two or more explanatory variables have a linear relationship with the dependent variable, the regression is called a multiple linear regression.

How do you know which regression model is better?

- Adjusted R-squared and Predicted R-squared: Generally, you choose the models that have higher adjusted and predicted R-squared values. …

- P-values for the predictors: In regression, low p-values indicate terms that are statistically significant.

What is the difference between B and beta in regression?

To my knowledge, in a regression model β is generally used to denote the population regression coefficient, and B or b is used to denote its realisation (the estimated value of the coefficient) in the sample.

What are the main differences between regression and correlation explain your answer with examples?

Regression describes how an independent variable is numerically related to the dependent variable. Correlation is used to represent the linear relationship between two variables. Regression, by contrast, is used to fit the best line and estimate one variable on the basis of another.

What are b1 and b2 in multiple regression?

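In a multiple regression with two predictors, b0 is the Y intercept, and b1 and b2 are the slopes (regression coefficients) for the first and second predictors. In the original example, each line is represented with a different set of b0 (Y intercept), b1 (slope for Month) and b2 (slope for Adv.).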

What does MS mean in regression?

Mean squares (MS) are the sums of squares divided by their degrees of freedom, computed for both the regression and the residuals.
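
For a model with p predictors and n observations, the regression degrees of freedom are p and the residual degrees of freedom are n − p − 1. The F statistic in the ANOVA table is the ratio of the two mean squares. Below is a short Python sketch with hypothetical sums of squares; the function name is illustrative.

```python
def anova_table(ss_reg, ss_res, n, p):
    """Mean squares and F statistic for a regression with p predictors and n observations."""
    df_reg = p            # regression degrees of freedom
    df_res = n - p - 1    # residual degrees of freedom
    ms_reg = ss_reg / df_reg
    ms_res = ss_res / df_res
    return {"MS_regression": ms_reg, "MS_residual": ms_res, "F": ms_reg / ms_res}


table = anova_table(ss_reg=120.0, ss_res=80.0, n=44, p=3)
print(table)
```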

What are R Squared and RMSE?

Both RMSE and R² quantify how well a regression model fits a dataset. RMSE tells us how well a regression model can predict the value of the response variable in absolute terms (in the units of the response). R² tells us how well it predicts the response in relative terms, as the proportion of the response's variance that the model explains, often expressed as a percentage.
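
The difference between absolute and relative fit shows up when the response is rescaled. The Python sketch below uses made-up data in metres. Its RMSE divides by n, whereas some software divides by the residual degrees of freedom instead. Converting the response and the fitted values to centimetres multiplies RMSE by 100 but leaves R² unchanged.

```python
import math


def rmse(y, y_hat):
    """Root mean squared error, in the units of the response (divides by n)."""
    return math.sqrt(sum((obs - fit) ** 2 for obs, fit in zip(y, y_hat)) / len(y))


def r_squared(y, y_hat):
    """R^2 = 1 - SS_res / SS_tot; unitless."""
    mean_y = sum(y) / len(y)
    ss_res = sum((obs - fit) ** 2 for obs, fit in zip(y, y_hat))
    ss_tot = sum((obs - mean_y) ** 2 for obs in y)
    return 1 - ss_res / ss_tot


y_m = [2, 4, 5, 4, 5]                 # hypothetical response, in metres
fit_m = [2.8, 3.4, 4.0, 4.6, 5.2]     # fitted values, in metres
y_cm = [v * 100 for v in y_m]         # the same data in centimetres
fit_cm = [v * 100 for v in fit_m]

print(f"metres:      RMSE = {rmse(y_m, fit_m):.3f}, R^2 = {r_squared(y_m, fit_m):.3f}")
print(f"centimetres: RMSE = {rmse(y_cm, fit_cm):.3f}, R^2 = {r_squared(y_cm, fit_cm):.3f}")
```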